A bit over two years ago I joined my current company as a Quantitative UX Researcher. As I’ve described before, the work involved is very similar to the data science/analyst roles I’ve had before (which is why I even managed to get the job). The biggest difference, however, is being embedded within a “UX” team as opposed to just being a free-floating data person or sitting on an analyst/strategy team.

I call it a “difference” because every field has its own culture. It has a history, a certain degree of shared experience and expectations between practitioners, its own jargon, and its own ways of getting things done and interacting with other functions. Coming from a pure data analyst/engineer background, I had no connection to ANY of that. I might have touched upon bits of the history of UX in my various management classes almost 20 years ago, from Taylorism and Scientific Management to HCI.

The most I’ve ever really thought about UX prior to this position was just “users are hitting a lot of friction around here, the experience sucks even if I use it, let’s make it not hurt to use.” All the work and discussions leading up to that conclusion, and the work that comes after, were sorta vague in my head. It just “happened”, without any intentionality on my part. That might be fine for an analyst juggling metrics and tests for a bunch of teams, but that whole process is what UX essentially owns.

So in my short 2 years of being on a UX team, here’s what I have learned thus far about this vast world I’ve accidentally parachuted into.

One thing I’ve learned is that, much like data science, UX has all sorts of preconceived notions about it. It’s not uncommon for engineering teams and project managers to think (and even occasionally say out loud!) “Oh, UX? You guys just make the UI look pretty, right?”

Hint: No.

Remember that people in UX often come from a wide array of backgrounds, including areas like Human-Computer Interaction, Industrial Design, Service Design, Sociology, Anthropology, Writing, and even Engineering.

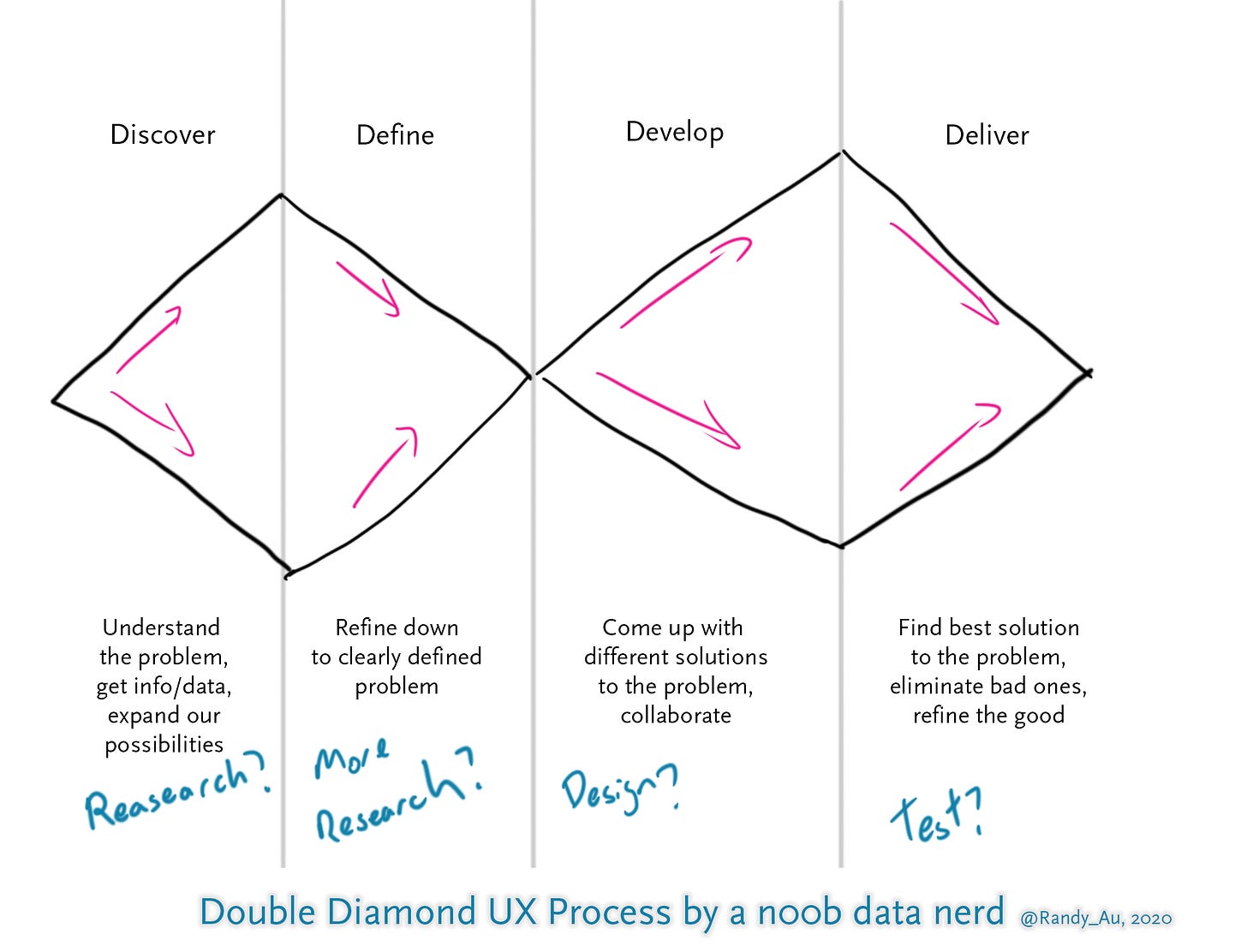

So one very common thing that all UX teams have to deal with is explaining what we do, and how we plan on working with other teams to solve product issues. While there’s obviously no one-size-fits-all way of doing any sort of work in any field, it seems that one very common way to explain the UX process to people is with a model called the “Double Diamond”, apparently created in the early 2000s by a UK group called the Design Council.

It’s a process that describes not just what “UX people” do to solve a problem, but what the entire product team, everyone who works on it, does.

The Double Diamond UX Approach

I had no idea such a thing existed when I started, but apparently it’s so well known within the UX community that it’s something of a given. Obviously, a generalized diagram like this has sparked a lot of refinements and discussion over the past 15 years, and even updates from the original creators.

So the idea behind the double diamond is that it’s supposed to illustrate the growing and shrinking of possibilities and ideas as a team goes through the design process in solving a UX problem. Problems could vary from huge, “we need to build a completely new product to solve the problem of loud keyboard typing at night” to fairly small, “users keep complaining our email looks ugly”.

It’s broken up into four phases of work: discover, define, develop, and deliver.

Discover

When given a problem like “users keep complaining our email looks ugly”, your first instinct may be to just rip the existing template apart and find a new and prettier one, but randomly guessing at a solution is probably a bad idea.

The first step in the UX process is to learn why exactly users think our email looks ugly. What does looking ugly even mean in this context? Why is it that users feel compelled to even tell us it’s ugly instead of just laughing about us on the open internet? What’s really going on here?

Normally, this phase of the work sits squarely in the domain of the UX Researcher, and in this context, it’s often a qualitative UX Researcher. There’s a lot of talking to users, asking users questions, watching users interact with the email, etc. The goal is to expand our knowledge about the problem.

It’s very easy to see that “UX Research Happens Here!”, but as a quant, I surprisingly don’t get a lot of work in this phase for a lot of questions. Quant methods require data, and are more suited towards showing/describing what’s happening, so if there’s an issue with an existing system then I can do work.

But if it’s a completely new product or something that isn’t instrumented, I have nothing to work with and we’d have to implement some form of data collection. There are definitely things we could try, such as running surveys on potential users, or using things like MaxDiff to quantitatively tease out user preferences, etc. It’s often very different from the usual “Data scientist stuff” that I do.
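To make the MaxDiff idea a bit more concrete, here’s a minimal sketch of the simplest way to score it: count how often each item is picked as “best” minus how often it’s picked as “worst”, divided by how often it was shown. Everything in it is hypothetical (the item names, the responses, and the `maxdiff_count_scores` helper), and real MaxDiff studies usually fit a choice model rather than relying on raw counts, so treat this as an illustration only.

```python
from collections import Counter

def maxdiff_count_scores(responses):
    """Count-based MaxDiff scores: (times best - times worst) / times shown.

    Each response is (items_shown, best_pick, worst_pick).
    Scores range from -1 (always picked worst) to +1 (always picked best).
    """
    shown, best, worst = Counter(), Counter(), Counter()
    for items, best_pick, worst_pick in responses:
        shown.update(items)
        best[best_pick] += 1
        worst[worst_pick] += 1
    return {item: (best[item] - worst[item]) / shown[item] for item in shown}

# Hypothetical survey: each task shows three email attributes and the
# respondent marks the most and least important one.
responses = [
    (("colors", "date", "subject"), "date", "subject"),
    (("colors", "date", "layout"), "colors", "layout"),
    (("date", "subject", "layout"), "date", "subject"),
]

scores = maxdiff_count_scores(responses)
for item, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{item:8s} {score:+.2f}")
```

Even this crude version gives a ranked list of what potential users care about, which is the kind of quantitative input that’s possible before any product instrumentation exists.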

Define

Once we have a ton of information and possibilities for why people are complaining about our ugly email, we can start actually defining “the real problem”. Just like how when users say “your site is hard to use” they usually mean something more like “the thing I want to get done? I can’t figure out how to do it”, we need to figure out what we actually want to solve. It’s rarely a case where the user knows exactly what the root cause of their issue is.

This is also work that often falls to UXRs, synthesizing all the data that was collected in the previous step, and then figuring out what the team should actually be addressing with further work. So we cut away all the unnecessary distractions we’ve collected and focus down on the one thing. This brings the first diamond down to a close.

Here the “Researcher” hat doesn’t really distinguish very much between qualitative and quantitative hats. It just requires the ability to synthesize data and find the patterns. While the qualitative researchers who often collect the bulk of the data are most familiar with it, anyone could find the key pattern that defines the problem.

In our example, let’s say that the complaints about our ugly email were because we were using a color scheme that made things hard to see, like weird shades of yellow and purple. Unappealing color schemes may be undesirable, but what pushed people to complain was that the email was used for an important event registration process, and the colors made it very difficult to find some critical information.

Upon further digging, the root cause of the issue was actually that the sole designer and owner of the email has a form of colorblindness and didn’t even realize that the colors they picked didn’t look pleasing together. Since it was a solo-run business, they had no feedback on what was going on.

So the core issue is that people needed the email for a critical piece of information, and the color scheme made it extremely difficult to find. What do we do about it?

Develop

Now that we have a targeted problem to actually solve, we can go about doing it. This is where the rest of the UX functions often jump in. Everyone would have opinions and ideas for how to make the email better now that we know what the problem was.

UX teams very often will pull in engineers, project managers, and product owners to make sure they see the problem and participate in figuring out how to best approach it. It’s not a UX-only exercise.

Designers would of course be thinking about a better color palette to use, and writers could have thoughts about how to better write the email to make the critical information easier to find.

Someone will likely ask why such a critical piece of information is only in an email and not also on the web site somewhere. Maybe we should rethink the entire interaction flow. Engineers will have ideas for how such an on-site display would work. Project managers might have seen or heard of a similar email used somewhere else that we can use as an example.

You can see how possibilities expand again.

Deliver

Finally, given all the possibilities that come out of figuring out and designing a solution, we need to decide on the one actual solution we’re going to deliver.

This means getting input from engineers and project managers to understand what could be done within the business and engineering constraints that exist, crossing off things that are impractical to even attempt. While we might want to do a full site rebuild because of this email, that won’t happen because we need to be done with this project within a month.

The UX Designers will put together various prototypes, giving shape to the ideas originally proposed. From there, UX Researchers can put those in front of users for usability testing and see how it goes. For more refined prototypes, we might go and do an A/B test in production to see how real users react. We might be doing data analysis on the prototypes to find further friction points in the process. If we’re building new stuff we’d definitely have to instrument it for analysis later.
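As a rough illustration of what evaluating such an A/B test can look like, here’s a minimal sketch of a two-proportion z-test using only the standard library. The counts are made up, the “clicked through to the registration details” metric is an assumption for the sake of the example, and `two_proportion_z_test` is a hypothetical helper name. A real analysis would also involve deciding on sample sizes and metrics ahead of time.

```python
import math

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value), where z > 0 means variant B converted better.
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the null hypothesis that both variants are the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical results: recipients who clicked through to the registration
# details, old email template (A) vs. redesigned template (B).
z, p_value = two_proportion_z_test(success_a=100, n_a=1000,
                                   success_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value here would suggest the redesigned email really does change how often people find the information, rather than the difference being noise.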

As you can see, more familiar quant work starts appearing as we get deeper towards delivery and execution. This is where I spend the majority of my work energy since development periods can drag on (especially post-launch evaluation) and it’s common for multiple things to be “mid-delivery” in parallel. Even after something has been “delivered” there’s a certain amount of maintenance awareness that is useful to have, which we can do using good metrics.

Obviously, the UX team isn’t doing all of the hard work here at this point. By now, it’s a full-fledged development project with engineering teams and other teams dedicated to working on the issue and delivering it. UX is just one piece that provides some valuable input into the whole thing, as well as providing the ability to evaluate if we actually hit our goal.

Limitations and stuff

Not all problems are simple enough that a single pass through the above process will solve everything. Sometimes halfway along the building of prototypes, we fail to meet our objectives and have to go back to the beginning again with newly learned information. Sometimes teams just don’t want to cooperate and interact with the process to begin with for whatever reason. Sometimes we just can’t find out what the actual problem is because it’s more complicated than we originally thought.

How Quants fit into all this

As I noted before, quant work leans a bit towards the later parts of the process. There are always places to contribute throughout the process of course, but our core strengths are most apparent when we start getting access to interesting data. It’s possible to go and collect data early on, but doing so at usable scale can be much more expensive, both in pure dollars spent and time, than a handful of targeted qualitative studies. So teams are often unwilling to take on the expense.

This is important to know because Quant UXRs are still pretty rare right now. With most UXR positions being qualitative in nature, a UX team that expects to utilize a quant much like a qual researcher is going to be a bit confused until they learn how the two types of researchers differ. They’re complementary skills and aren’t interchangeable.

This also means there’s opportunity to grow as a researcher, by learning qualitative methods and rounding out your research skill set. I’m generally poor at speaking to people and asking them questions, but I actually went out to a conference and did user intercept research, in a non-English-speaking country no less! While it was terrifying in its own right, it was well worth doing just to take a step out of my little data bubble.

Differences w/ my old analyst/DS workflows

I’ve spent much of my career supporting product teams as they work on features. The fundamental work I do even now hasn’t really changed much. But one thing that UX teams do that I never really did is that, as an organization, they push to be involved throughout the product development process.

Having an official, management-sanctioned seat at the table right next to Engineering and Project Management means getting involved at the very beginning of a project, when people are deciding whether something is even worth exploring to begin with.

Prior to this, while I was running solo and on smaller data teams, I had to rely on more informal relationships with project managers to get an idea of what they were thinking. When I kept tabs on what they were thinking, I could help provide data on whether some feature was worth considering. But if I got distracted by other work and didn’t have those regular conversations with PMs, I could be totally surprised by a new project.

Sure, a data science team could also fight for a seat at the product development table. I’m sure some teams in the world have done this. But since the UX team was doing it anyway because it’s integral to the overall UX process, I didn’t have to do the significant amount of work (potentially years!) to make it happen.