Character Encoding, Part 3 of 3 — Gotchas while working with Unicode

Off by one errors are common in programming, right?

Off by one errors are common in programming, right?

We’re done! (Photo: Randy Au)

So after I published the original two character encoding articles (Part 1 and Part 2 here), a couple of friends chimed in that I left out an important part of the story. Unicode edge cases and gotchas! And they’re right. While using this stuff is simple on the surface, the real world is full of surprises.

This is a topic that goes much deeper into the technical usage of text than my own personal usage, so I’ll be relying on a lot of sources and people much more familiar with text and character encoding than I am.

Here we go, in no particular order.

Comparing Composed & Precomposed Characters Is a Bad Idea (Unless You Normalize)

Unicode allows for characters to be composed (such as adding an accent mark to a letter). It will also have precomposed characters (the letter with the accent mark already attached as a single character). They look exactly the same, and Unicode considers them semantically the same. These things occupy different code points, and if you’re comparing strings naively with bytes, you’re going to get the wrong answer.

You can also have multiple accent marks on a character — the ordering of the marks doesn’t usually matter for the display of the character, but a dumb computer that compares bytes will see a difference. All of this is bad.

Because Unicode considers such cases to be semantically equivalent, it provides a way to normalize strings so any string can be compared to a similarly normalized string and yield consistent results.

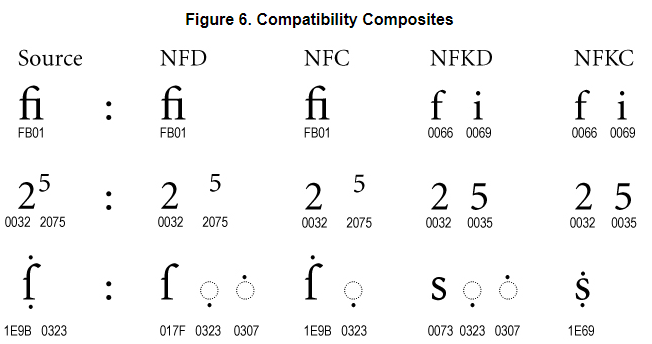

Example of the forms of normalization, NFD, NFC, NFKD, NFKC. Source: Unicode

Officially, there are four forms: NFD-canonical normalized form decomposed, NFC-canonical normalized form composed, and NFKD and NFKC are normalized form (k)ompatibility versions. Compatibility versions will, among other things, break out features like subscripts and ligatures and treat them as separate characters.

For the most part, it doesn’t really matter which of the four normalization forms you use to compare strings, so long as both strings use the same normalization. The operations are idempotent so it doesn’t hurt to make sure the normalization you’re using is correct. All sorts of chaos can happen when systems make contradicting assumptions about normalization.

Some gotchas I’ve found:

OSX uses its own flavor of normalization where certain ranges aren’t normalized correctly. The iconv tool specifically has a UTF-8-mac encoding just for this. Some details here.

Normalization may not be stable for concatenation. Normalized(X+Y) is not necessarily the same as Normalized(X) + Normalized(Y). So be careful when you call normalization. Luckily, the process is idempotent so running it multiple times only costs you some computation. Details.

Normalization to composed forms (NFC/NFKC) may sometimes make strings longer! This is because Unicode declared version 3.1 to be the “composition version” for compatibility reasons. New compositions are discouraged, and those that do exist may take a composed character and break it into the 3.1 plus a combining character.

Unicode Can Encode Practically Anything — This Includes Mojibake

Mojibake is when a computer uses the wrong encoding to convert bytes into human-readable characters. This means that mojibake, while nonsensical from a human standpoint, is actually composed of valid characters in some language, albeit in a useless order.

Some example mojibake

This means that if someone were to take an incoming stream, interpret it using the wrong encoding, and then transcode it to Unicode — Unicode will happily accept the sequence of characters and store them as-is. Since detecting legacy encoding schemes can be somewhat error-prone, and not everyone understands the finer points of text encoding, this leads to situations where corrupted incorrect Unicode will enter a dataset.

Luckily, someone has created a way to try to fix such issues. Robyn Speer created a python library named ftfy that uses many heuristics to guess when some character sequences look out of place for the context and attempts to correct them. It’s super useful if you’re doing a lot of text-heavy work and aren’t sure about the quality of the data.

I highly recommend peeking at the well-commented source code to see how it balances considerations, like the possibility that there are intentional math equations and “eye-like/nose-like” symbols for emoticons as opposed to “real mojibake.”

Thanks to Igor for pointing out that there’s a package that fixes this particular edge case. I don’t work with text as much and wasn’t aware.

String Length With Unicode Is Quirky

Notionally, the length of a string is the number of characters in the string. Except since UTF-8 is variable-width, UTF-16 has surrogate pairs, and Unicode has combining characters that aren’t independent characters themselves, you can’t simply just count chunks of bytes to get the length of a string. Except that’s how many legacy string length calculations are done.

Unless you’re specifically using proper Unicode-aware length functions, you might get weird answers. For example, JavaScript internally uses UTF-16, but it can give interesting length results because of how it counts. It counts single surrogate pair characters as two characters instead of one. You can’t even count on normalization to minimize the length of the string in bytes because, as stated above, normalization can sometimes make strings have longer sequences of code units!

In general, once you get into Unicode, string length is a hazy concept at best. You’ll probably want to avoid it.

Beware of Broken Unicode Implementations, They’re Out There

The number one recommendation for when a developer is thinking about implementing Unicode is simple: don’t. Use an existing library instead. So it’s quite rare to come across a dodgy implementation of Unicode.

Then, there’s MySQL…

Due to MySQL’s long-held tradition of being fast without necessarily being correct, it changed its original “utf8” (based off a 6-byte UTF-8 in RFC 2279) encoding to have a maximum of three bytes in 2002. UTF-8 (RFC 3629) can have a maximum of four bytes, which means practically everything outside of the Basic Multilingual Plane (BMP) is going to error.

Currently, in MySQL 8, UTF-8 is still declared to be “an alias for utfmb3”. The correct form of UTF-8 that works with four bytes is called utfmb4. That’s what you should be using. For backward compatibility reasons, this situation will likely never change.

More details on the whole MySQL (and MariaDB) UTF-8 craziness can be found here.

Similarly, Make Sure the “UTF-8” Label Is Really UTF-8

Oracle similarly has non-intuitive character encoding parameters, and the Unicode 6.2 compatible UTF-8 is called “AL32UTF8” while their “UTF8” is actually Unicode 3.0 compatible, CESU-8 compliant. CESU-8 is a variant of UTF-8 that uses two 3-byte sequences to emulate the UTF-16 surrogate pairs to access the higher character planes. This is similar to the 6-byte UTF-8 MySQL originally was compliant with.

Java also has a “Modified UTF-8” that behaves sorta like CESU-8 but has some different logic revolving around null characters. It also supports standard UTF-8. Usually, you can use UTF-8 as normal, but Modified UTF-8 can catch you off-guard in some situations.

Sometimes, Even Well-Used Libraries Can Have Bugs

The fact that many different types of scripts exist in Unicode, but the vast majority of the world uses a very small subset, leads to interesting bugs even in libraries from very big software companies.

One example is the “characters of death” that affected iOS. In this case, the Unicode itself was fine, but it uses Indic script. Those scripts have some unique rules for combining characters and diacritics to represent certain consonant-vowel sounds. This caused an issue with the font rendering engine, causing a crash. Either way, certain sequences of characters crashed iPhones everywhere until the bug was fixed.

Character Detection Will Betray You in Weird Ways

Remember how in Part 1, I showed how character detection can be fooled, especially on short strings? Well, while researching this, I found a great example, with a hilarious Wikipedia page called “Bush hid the facts.” This refers to a bug where charset detection takes text written in notepad in a certain way, detects it as Chinese, and converts it to nonsensical Unicode.

Windows, in general, has had support for Unicode very early on, back when it was USC-2 (which predated UTF-16 but doesn’t support surrogate pairs and so can only represent BMP characters). This long history of being built around UCS-2/UTF-16 meant there’s some weirdness revolving around UTF-8.

Remember That You Still Need the Right Fonts

Unicode encodes graphemes, but the final step of displaying the grapheme to a user through the use of a glyph is a separate issue. You can indicate as many characters for display as you want, but if your end-user doesn’t have the font, or has some weird broken font, they’re still not going to see what you’re sending to them.

These days, systems are fairly good about having enough of a selection of fonts to be able to render most text. The main issue is when new characters get added in — oftentimes emoji. This is why while a new emoji might be declared on the Unicode standard, it may take some time for it to work its way into your phone. The fonts need to be updated with the new glyphs.

Super Props to FakeUnicode on Twitter

Not being familiar with Unicode until just recently, I asked this great Twitter account if there were any interesting gotchas, and boy did I get a bunch! I’ll summarize here, but you really should check out the thread because I can’t really do it justice without just copy-pasting the whole thing.

Sorting by code units (the bytes) is generally a bad idea (Unicode specifically recommends you use the Unicode defined collation algorithms). The surrogate ranges are in the BMP and some characters are very distant from others.

In some languages, going from upper case to lower case is not a reversible operation.

Unicode specifically says you can’t pad code points with 0’s (well, it specifies that they need to be unique, effectively precluding leading zeros) Some programs don’t care about these “overlong encodings” — vim is one such program.

And whatever… this… is:

There’s more craziness out there. I’m sure of it. But this list is already long enough and I can stand to not think about Unicode for at least a month. So I’m gonna end it here!

Extra Reading

Finding documentation for these off-beat quirks surrounding Unicode is hard. But here are a few aside from the ones I’ve already linked to in the main body:

Globalization Gotchas - Macchiato

I'm preparing a presentation for the next Unicode conference in March, and have been thinking about doing one on the…www.macchiato.com

Dark corners of Unicode

I'm assuming, if you are on the Internet and reading kind of a nerdy blog, that you know what Unicode is. At the very…eev.ee

Some gotchas about Unicode that EVERY programmer should know

Edit descriptionnukep.github.io

Thanks?

I wholeheartedly blame these two people for goading me into writing this third piece to begin with. It’s all your fault.

TJ Murphy

The latest Tweets from TJ Murphy (@teej_m). Data nerd. Previously @imgur, @minted, @minomonsters, @zynga. I made a…twitter.com

Igor Brigadir

The latest Tweets from Igor Brigadir (@IgorBrigadir). PhD @insight_centre @ucddublin. Machine Learning, Natural…twitter.com