It honestly feels very weird to be writing in the midst of a new war of aggression breaking out in Ukraine and ongoing threats of escalation. I have nothing new to add to that conversation, but hope that we see the return of peace with as little tragedy as possible.

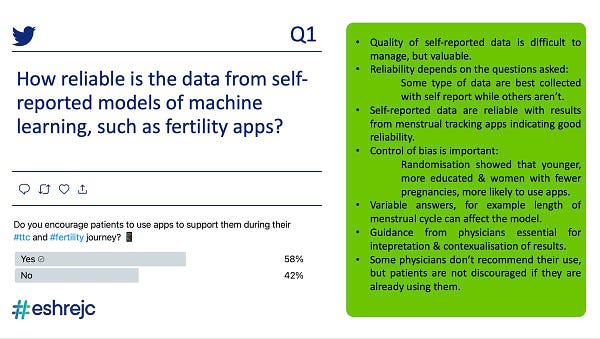

This week I somehow got pulled into a Twitter discussion run by the ESHRE (European Society of Human Reproduction and Embryology) journal club (hence the hashtag #ESHREjc). They were discussing a recent paper on using AI/ML methods to help predict pregnancy for couples who are trying to conceive.

You can see the hashtag activity with this time-boxed saved query here.

At a very high level, the paper collected self-reported data from people trying to get pregnant and checked whether they had in fact become pregnant 6 and 12 months later. The authors then took the data they had on all the patients and used ML to build models for predicting pregnancy, including logistic regression, SVMs, boosted random forests, etc. Their models scored better on the Area Under the Curve (AUC) metric than similar attempts in other papers, so they used this to argue that such methods are potentially viable for this sort of work.
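For anyone who hasn't run into the metric: assuming the paper's AUC is the usual ROC AUC, it can be read as the probability that a model gives a randomly picked positive case (here, a couple who did get pregnant) a higher score than a randomly picked negative one, with ties counting as half. Below is a minimal pure-Python sketch of that definition. The `roc_auc` function and the scores are made up for illustration; this is not the paper's evaluation code.

```python
def roc_auc(scores, labels):
    """ROC AUC as the share of (positive, negative) pairs where the positive
    case gets the higher score; tied scores count as half a win."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical model scores for six couples (1 = pregnant at follow-up).
scores = [0.9, 0.8, 0.35, 0.6, 0.3, 0.1]
labels = [1, 1, 1, 0, 0, 0]
print(roc_auc(scores, labels))
```

Here 8 of the 9 positive/negative pairs are ranked correctly, so the AUC is 8/9 (about 0.889), while random guessing lands around 0.5. Note that the metric only cares about ranking, not about whether the predicted probabilities themselves are well calibrated. The pairwise loop is quadratic; libraries compute the same quantity more efficiently by sorting.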

Somehow I got pinged into the discussion because someone felt I had expertise in AI. I don’t particularly claim to have such expertise, but I do have some cautious opinions about the general topic, so I took a skim of the paper and very respectfully did my best to add something to the discussion. But overall I watched from the sidelines since I don’t have any familiarity with fertility studies, nor a burning desire to rant about AI.

The interesting thing about the conversation is that a lot of camps were represented. There seemed to be some AI/ML folk (a number of whom had likely been pinged into the conversation like I had been, or were Twitter followers of those folk). There were fertility researchers who may or may not have had experience with ML methods. There were physicians and practitioners who had their own views about whether an AI tool would help them in their actual work.

With the exception of the AI folk who weren't from the field of fertility or medicine (and thus were brought in specifically for our opinions on AI), the people in the discussion had a very broad range of familiarity with AI. Some were familiar with and had used similar methods before, while others were busy being physicians and thus had very little knowledge of it.

The opinions also varied quite a bit, ranging from very optimistic to very cautious, with a bit of skepticism mixed in. There seemed to be a recognition that tools developed with AI have the potential to be useful, but there were plenty of people with a healthy skepticism about the extent and form such usefulness would take.

But, man, with so many fields of study in play, having a coherent discussion where everyone's on the same page is a brain bender. At one point, I raised the question of whether building the paper's base dataset out of self-reported data might increase the risk of the models being overfit (since self-reported data always has very peculiar biases). Someone chimed in from the side that since the models were built using cross-validation, we *know* the models aren't overfit.

Perhaps I made a mistake and should've used the more precise term 'generalizability' above, but I was in the headspace of the general family of curve-fitting algorithms being led astray by the self-reported data. Meanwhile, this person who chimed in about cross-validation just trotted out the old (? I hope it's old?) and dangerous fallacy that the technique somehow magically guarantees that overfitting is solved. If that were true, I've got a lot of spuriously correlated datasets to show Skynet.
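To make the cross-validation point concrete, here's a minimal sketch with entirely made-up data (not the paper's data or models). It assumes a hypothetical collection artifact: a self-reported feature that tracks the outcome in the collected sample but not in the wider population. The model is a deliberately simple one-feature threshold rule, and `make_data`, `fit_stump`, and `artifact_strength` are illustrative names I made up. Because every fold comes from the same biased sample, the artifact shows up in every fold and the cross-validated score looks great (around 0.8 in expectation); on data collected without the artifact, the same model should do about as well as a coin flip.

```python
import random


def make_data(n, artifact_strength, rng):
    """Simulate n rows of ((x_real, x_artifact), y) with a balanced binary outcome y."""
    rows = []
    for _ in range(n):
        y = 1 if rng.random() < 0.5 else 0
        # A weak genuine signal that holds everywhere.
        x_real = rng.gauss(0.3 * y, 1.0)
        # Hypothetical collection artifact: only tracks y when artifact_strength > 0.
        x_artifact = rng.gauss(2.0 * artifact_strength * y, 1.0)
        rows.append(((x_real, x_artifact), y))
    return rows


def accuracy(model, rows):
    """Share of rows where 'feature > threshold' matches the outcome."""
    j, t = model
    return sum(int(x[j] > t) == y for x, y in rows) / len(rows)


def fit_stump(rows):
    """Pick the single feature and threshold with the best training accuracy."""
    best_acc, best_model = -1.0, None
    for j in range(2):
        values = sorted(x[j] for x, _ in rows)
        for t in values[::10]:  # every 10th value as a candidate threshold, for speed
            acc = accuracy((j, t), rows)
            if acc > best_acc:
                best_acc, best_model = acc, (j, t)
    return best_model


def k_fold_accuracy(rows, k=5):
    """Plain k-fold cross-validation: mean held-out accuracy across the k folds."""
    folds = [rows[i::k] for i in range(k)]  # rows are i.i.d., so striding is a fair split
    scores = []
    for i in range(k):
        train = [r for j, fold in enumerate(folds) if j != i for r in fold]
        scores.append(accuracy(fit_stump(train), folds[i]))
    return sum(scores) / k


rng = random.Random(42)
sample = make_data(600, artifact_strength=1.0, rng=rng)      # the data we collected
population = make_data(600, artifact_strength=0.0, rng=rng)  # where the artifact is absent

cv_acc = k_fold_accuracy(sample)
pop_acc = accuracy(fit_stump(sample), population)
print(f"5-fold CV accuracy on the collected sample: {cv_acc:.2f}")
print(f"Accuracy on the wider population:           {pop_acc:.2f}")
```

Cross-validation estimates how well a model does on more data drawn the same way as its training data. It can catch a model memorizing noise in its training folds, but it can't see a bias that's baked into how every single row was collected.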

Anyways, back to the point. What wound up happening was that a nice cluster of people had a pretty intelligent and nuanced discussion about the issues involved in using AI/ML methods in fertility research. Some people were highlighting that there are inherent limitations that no amount of AI will help with:

As this person states, we can AI all we want, but [lots of biological/clinical stuff] won’t change. Other folk were stressing the need for caution since such models are touching upon the lives of people.

There were people hopeful that the ML algo might’ve found some new patterns that, with the help of causal modeling and similar methods, might lead to better research. Physicians wanted to see it lead to personalized counseling or use it to give patients more context.

Meanwhile, AI folk were trying to explain some of the positives and negatives of creating and wielding the AI hammer, something of an attempt at shedding light on what's typically presented as a black box of magic.

But watching the conversation around AI and health was like peeking into the Tower of Babel. A lot of people were talking about the same thing, but I'm honestly not sure how much of it was actually connecting. I certainly couldn't follow much of the discussion about the clinical aspects. And while there were people discussing issues around data collection and bias, I'm not sure how many people followed those discussions beyond the surface level either.

The overall sense I got from the whole experience was that everyone has a loooot to learn from each other. People who are writing, reading, and evaluating papers will one day have to become somewhat familiar with the part of the AI literature that discusses pitfalls and ethical concerns. Otherwise the situation becomes one of "let's throw data at algorithms!", which never works out well. Meanwhile, plenty of AI folk need to do the same with the fields they're applying their models to.

Then, we all definitely need to build a shared way of talking about these things. Everyone's using jargon for things like overfitting, bias, sampling, and metrics, and those terms carry different shades of meaning depending on where you're from. So people think they're communicating clearly, but there's a real risk that they're talking past one another.

Meanwhile, I’m just sitting here on the side doing what I always do, trying to get people to be hyper aware of how data is collected.

No one sent in anything for me to share/review this week. So if you have anything for next week, send them on over~

Standing offer: If you created something and would like me to review or share it w/ the data community — my mailbox and Twitter DMs are open.

About this newsletter

I'm Randy Au, currently a Quantitative UX researcher, former data analyst, and general-purpose data and tech nerd. Counting Stuff is a weekly data/tech newsletter about the less-than-sexy aspects of data science, UX research and tech. With excursions into other fun topics.

All photos/drawings used are taken/created by Randy unless otherwise noted.

Curated archive of evergreen posts can be found at randyau.com

Supporting this newsletter:

This newsletter is free, share it with your friends without guilt! But if you like the content and want to send some love, here’s some options:

Tweet me - Comments and questions are always welcome, they often inspire new posts

A small one-time donation at Ko-fi - Thanks to everyone who’s sent a small donation!

If shirts and swag are more your style there’s some here - There’s a plane w/ dots shirt available!