A while back, while I was having some Twitter conversations about data cleaning and generally funky data sets, someone suggested that the MARC from the Library of Congress was a fertile field for shenanigans.

This week I finally started looking at the data and… oh my… This might take more than a week to work through. Hopefully y’all will have fun coming along the journey with me because this one is… a heck of a trip.

Data acquisition and basic setup

First, where do we get the data?

The Library of Congress distributes their records as a paid subscription service. If you need the most up to date catalog information (the latest is from 2019 as of this writing in early/mid 2021), you’ll have to fork over money for it.

HOWEVER, they released a huge collection of record data from 2016 for open access that you can obtain.

You can get the data, and also the check prices, on the LoC’s MARC distribution services page. Scroll down slightly for the “MARC Open-Access” data. I’m primarily going to download the “Books All” data set, but there’s lots of other stuff available.

> wget https://www.loc.gov/cds/downloads/MDSConnect/BooksAll.2016.combined.utf8.gz

Length: 2147483648 (2.0G) [application/x-gzip]

Saving to: ‘BooksAll.2016.combined.utf8.gz’

2021-05-14 15:44:55 (3.27 MB/s) - ‘BooksAll.2016.combined.utf8.gz’ saved [2147483648/2147483648]

> gunzip BooksAll.2016.combined.utf8.gz

gzip: BooksAll.2016.combined.utf8.gz: unexpected end of file

# ?!?!?!?!?

# Well that EoF error was definitely unexpected...

# Records are in a binary format so it probably just doesn't play well with gzip looking for an EoF symbol

# So we'll just brute force dump that data out

> cat BooksAll.2016.combined.utf8.gz |gunzip > BooksAll.2016.combined.utf8

gzip: stdin: unexpected end of file

# Same error, but this leaves a file to examine

> wc BooksAll.2016.combined.utf8

0 490014896 6901494696 BooksAll.2016.combined.utf8

# Whatever's in here, there's 6.9 billion character's worth of it, and no newlines.The data itself is in a very specific format called “MARC 21”, and we’re going to need some help making sense of the data without building a parser from scratch. Luckily, there are packages that handle this, pymarc being one of them.

> pip3 install pymarc

Installing collected packages: pymarc

Successfully installed pymarc-4.1.0With pymarc we should be able to parse and understand these files… so let’s take a look at what’s inside…

> import pymarc

> fn = 'data/Booksall.2016.combined.utf8'

> ifile = open(fn,'rb')

> reader = pymarc.MARCReader(ifile, to_unicode=True)

> i = 0

> for x in reader:

> i+=1

> print(i)

7922609

# So apparently there's's 7.9 million records in this data set

# reopen file

> ifile = open(fn,'rb')

> reader = pymarc.MARCReader(ifile, to_unicode=True)

# just read the next entry off the file iterator

> record = reader.__next__()

> print( record)

=LDR 00720cam a22002051 4500

=001 \\\00000002\

=003 DLC

=005 20040505165105.0

=008 800108s1899\\\\ilu\\\\\\\\\\\000\0\eng\\

=010 \\$a 00000002

=035 \\$a(OCoLC)5853149

=040 \\$aDLC$cDSI$dDLC

=050 00$aRX671$b.A92

=100 1\$aAurand, Samuel Herbert,$d1854-

=245 10$aBotanical materia medica and pharmacology;$bdrugs considered from a botanical, pharmaceutical, physiological, therapeutical and toxicological standpoint.$cBy S. H. Aurand.

=260 \\$aChicago,$bP. H. Mallen Company,$c1899.

=300 \\$a406 p.$c24 cm.

=500 \\$aHomeopathic formulae.

=650 \0$aBotany, Medical.

=650 \0$aHomeopathy$xMateria medica and therapeutics.

What is with all these symbols?!

Right on the initial data download page, you’ll notice a very curious sentence: “Records are available in two file formats - UTF8 and XML.” Us data science nerds would go “huh?” because UTF-8 is a text encoding, not something we typically consider a “file format”. But no, the people at the LoC aren’t being silly, It seems they are using specialized terminology unique to their field.

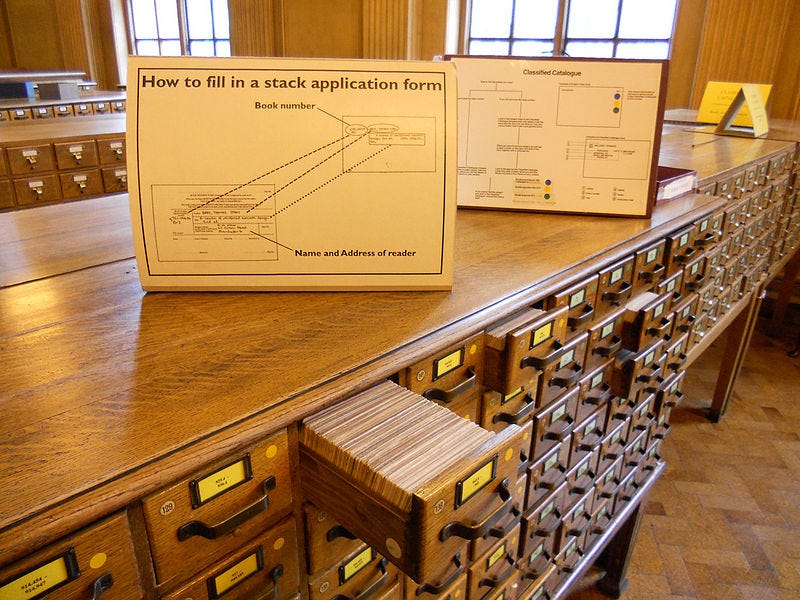

Librarians all work with the “MARC” file format, which stands for “MAchine-Readable Cataloging”. It’s the international standard for recording and transmitting catalog data. What do they mean by “catalog”, you ask? Why, the library catalog! All the little cards in the library that describe all the items in the library, what the item is, who created it, when it was created, titles, authors, publishers, subjects, etc..

Hopefully I’m not the only one here old enough to have learned how to use these cards back in school…

Historically, MARC was an electronic format created in the 1960s that was used to create and share the information on these cards. (Here’s a brief article about the woman who developed the system, Henriette Avram). As the cards themselves have slowly become obsolete, the MARC format and software behind it continues to live on.

The most current version of MARC, called “MARC 21” (with 21 meaning “for the 21st century”) was created in 1999 (with the most recent updates in December 2020). It’s important to note that the system still strongly shows it’s 1960s roots, where computation and storage capabilities were extremely limited, resulting in a really terse binary format that we saw in the code snippet above.

MARC 21 is actually a bunch of standards

At first glance, it would seem like we’re dealing with one big complicated standard, but life is even more complicated. The MARC 21 format in use by the LoC is actually a collection of standards with a long history.

Standard layer 1 — ISO 2709

First, the structure of the format follows “Format for Information Exchange” ISO 2709. This standard defines a record as the following:

The first 24 characters is the Record Label, or in the LoC’s spec, the “Leader”. This gives lots of information about the record, including its total length, encoding, length of subfields, etc.

Then, the “Directory” of a maximum of 12 characters, that communicate information on how the subsequence variable field data is (length, starting position, etc)

The data fields, these are the variable-length. They’re primarily identified by 3-digit codes in MARC 21.

So as you can see, ISO 2709 just sets the basic ground rules for what a record looks like, it doesn’t actually mention anything about the actual content of the records. For that, the MARC 21 standards come into play.

Standards layer 2 — MARC 21

MARC 21 defines much of what the various field codes mean. To do that, they first split into five standards, MARC 21 for Bibliographic data, Authority data, Holdings data, Classification data, and Community Information.

Why have 5 standards? Because the needs of each kind of data differ. Since all the fields are designated by 3-digit codes like “245”, each type of data could use a different meaning for the same code.

We’ll be focusing on bibliographic data here today, but here’s some common data field labels, pulled from the standard summary. There are over 200 different fields available.

100 - Personal names

110 - Corporate name

245 - Title Statement

300 - Physical description

306 - Playing time

700 - Added Entry — Personal name

710 - Added Entry — Corporate Name

On top of this each field can have subfield data. For example, field 100, the main entry for Personal names of an item (often for the person chiefly responsible for the work) has this internal structure:

There are subfield codes like $a, $d, etc. that represent extra data within the field.

So in our example name field from the beginning, =100 1\$aAurand, Samuel Herbert,$d1854-, we know that “Aurand” is a personal name written surname first. The name is associated with the date 1854. That helps links the name “Aurand, Samuel Herbert, 1854” to an authority record. (In our typical computer parlance we’d say “Aurand, Samuel Herbert from 1854” is the “canonical name” for this particular author. Librarians seem to use the term “authority” for this meaning.). Incidentally, you can use this site to search for authority records.

As you can see, the MARC standards are super flexible. There’s room for hundreds of fields, and within them you can have a bunch of subfields. Since the definition of the codes rests in the libraries that use the system, and everything is just character strings, you can put just about level of detail into a record.

Standards Layer 3 — Outside standards

Despite having the data structure and the fields defined, this is still not enough information to fully characterize records! The introduction of the MARC 21 format helpfully adds this:

The content of the data elements that comprise a MARC record is usually defined by standards outside the formats. Examples are the International Standard Bibliographic Description (ISBD), Anglo-American Cataloguing Rules, Library of Congress Subject Headings (LCSH), or other cataloging rules, subject thesauri, and classification schedules used by the organization that creates a record. The content of certain coded data elements is defined in the MARC formats (e.g., the Leader, field 007, field 008).

Yup, the actual content of the various fields are also controlled. As anyone who’s ever had to put together a data dictionary learns, you can’t let everyone tag everything independently however they want. It inevitably leads to chaos and confusion, forcing someone to come in after the fact to standardize the mess.

To prevent this chaos, things are filed in standardized ways. One such way is under subject headings so that everything that’s about a single topic can be found under a single set of words/topics. It vastly improves the information search process because blind keyword search is going to miss a bunch of synonyms and things.

The Library of Congress maintains the LoC Subject Headings, which you can search through here (or here. I have no idea why there are multiple places for this…). For example, this is the entry of “Harbor Masters” so you should be able to use this topic to find entries about harbor masters within the collection data.

All these other rules and standards are WAY out of scope for my brain and this newsletter post so we’re just going to skip over them today. For the purpose of the LoC, it seems that they work under the Anglo-American Cataloguing Rules 2nd ed. (AACR2) which is used by the US, Canada and UK library associations, and the Library of Congress Rule Interpretations (LCRI), a set of opinions and interpretations of AACR2.

Okay okay, now that we know how complicate this is, how can we make use of it?

pymarc has some helpful functions like record.title(), and record.author(), for accessing the most common stuff. But the vast majority of the MARC 21 format is only directly available by direct access. Luckily it provides a simple accessor function for that.

> print (record.author())

Aurand, Samuel Herbert, 1854-

# note this helpfully adds the $d subfield

> print( record['100'])

=100 1\$aAurand, Samuel Herbert,$d1854-

> print( record['100']['a'])

Aurand, Samuel Herbert,You can even convert whole record into a dictionary structure, but it comes out as a rather awkward list of individual dictionaries for each field. This makes iterating through everything rather awkward since you have to walk it all to find fields of interest.

> record.as_dict()['fields']

[{'001': ' 00000002 '},

{'003': 'DLC'},

{'005': '20040505165105.0'},

{'008': '800108s1899 ilu 000 0 eng '},

{'010': {'subfields': [{'a': ' 00000002 '}], 'ind1': ' ', 'ind2': ' '}},

{'035': {'subfields': [{'a': '(OCoLC)5853149'}], 'ind1': ' ', 'ind2': ' '}},

{'040': {'subfields': [{'a': 'DLC'}, {'c': 'DSI'}, {'d': 'DLC'}],

'ind1': ' ',

'ind2': ' '}},

{'050': {'subfields': [{'a': 'RX671'}, {'b': '.A92'}],

'ind1': '0',

'ind2': '0'}},

{'100': {'subfields': [{'a': 'Aurand, Samuel Herbert,'}, {'d': '1854-'}],

'ind1': '1',

'ind2': ' '}},

{'245': {'subfields': [{'a': 'Botanical materia medica and pharmacology;'},

{'b': 'drugs considered from a botanical, pharmaceutical, physiological, therapeutical and toxicological standpoint.'},

{'c': 'By S. H. Aurand.'}],

'ind1': '1',

'ind2': '0'}},

...On the bright side, the module helpfully parses out the various unnecessary control symbols in the data, making it significantly easier to to read. But despite having the ability to read these data files right now, they’re effectively just binary logs and we need to create a light ETL process to convert it into something that’s more useful for our purposes.

So what’s the plan?

Figure out a couple of research questions to build an ETL towards

Learn enough about the different fields so that we actually know how to use them, this is probably the hardest part

Slap the data into a suitable data structure and attempt to do the analysis

Since even getting to this point took a couple of days of catching up on information science and cataloguing, I barely have any time to understand the fields enough to build interesting questions. So for this week, I’m just going to try to figure out the growth of the LoC collection over time…

Even figuring out that question is not as simple as it may first seem. For starters, what field is the correct one to use for the time plot?

# List of fields that mention Dates..

005 Date and Time of Latest Transaction

033 Date/Time and Place of an Event

045 Time Period of Content

046 Special Coded Dates

263 Projected Publication Date

362 Dates of Publication and/or Sequential Designation

363 Normalized Date and Sequential Designation

518 Date/Time and Place of an Event Note

# But guess what! None of those seem correct (except perhaps 362 and 363). Instead the correct choice seems to be here

Field 008 detailed documentation

Character Positions

All materials

00-05 - Date entered on file (Computer-generated, six-character numeric string that indicates the date the MARC record was created. Recorded in the pattern yymmdd.) <-- SURPRISE! Y2K TRAP?!

06 - Type of date/Publication status (a single letter code)

07-10 - Date 1

11-14 - Date 2

# Date 1,2 depending on the codes in character 06, can mean things like B.C. dates, date ranges, a detailed year or date, dates for editions and reprints, etc... Handling these in a useful way is difficult... Sometimes they point to other fields.Here’s a first pass:

yy = {}

for x in reader:

try:

for f in x.as_dict()['fields']:

if "008" in f:

d = f['008']

# ugh, screw this, read the first 2 characters of that date field to see how it's going

yy[d[:2]] = yy.setdefault(d[:2],0)+1

except:

print(x)

#we need this raw exception here to catch the last entry that throws a None =\

import pandas as pd

df = pd.DataFrame.from_records([(k,v) for k,v in yy.items()])

df.sort_values(0).reset_index(drop=True).plot()

Plotting the first two yy character values of the 008 data field… looks very peculiar… The data starts ramping up at “68”… 1968 is when MARC was first created, so it makes sense. The docs say this field is typically system generated, so I'm operating under the assumption that this is when a record is catalogued into the system.

Then the counts ramp up and every year a few hundred thousand entries are added every year (at a surprisingly steady pace!) It then tapers off at “04” and “05”. There’s random stray values in the middle, like 18, 22, etc… and it’s not clear what those mean without more digging.

On the bright side, it seems like we’re safe from Y2K issues in this specific data field for another ~40 years until 2068 rolls around. Hopefully they update the format and migrate the millions of records in existence before then.

The other peculiar thing I've noticed is that the data is from 2016… things have tapered off by “04”… It’s not clear what causes this. I suspect it may have to do with the speed the LoC can process and catalog items. The data is said to range from 1968 to 2014…, so I'm wondering if my data set is somehow flawed and truncated (the EoF error), or if there is a significant cataloging backlog or something. It's also not clear if I'm using the correct date field for this task.

I’ll have to read up on all of this, unless someone can point me to some good reference materials.

We’re not done, where are we going?

There seems to be an entire mountain of understanding I need to climb up before I can work with this data. I’m not exactly sure how far I want to go with it. But the situation presents a really interesting opportunity — it gives me a chance to reflect on the depth needed to learn a data set.

I talk about it quite a bit, but it’s something I’ve always done as part of my work and thus it happens without my paying strong attention to it. The fact that this data lives completely outside my realm of domain experience means it’ll be an extra challenge.

I’ll probably take at least one more stab at this and see where things wind up, and hopefully everyone can learn something along the way.

About this newsletter

I’m Randy Au, currently a Quantitative UX researcher, former data analyst, and general-purpose data and tech nerd. The Counting Stuff newsletter is a weekly data/tech blog about the less-than-sexy aspects about data science, UX research and tech. With occasional excursions into other fun topics.

All photos/drawings used are taken/created by Randy unless otherwise noted.

Supporting this newsletter:

This newsletter is free, share it with your friends without guilt! But if you like the content and want to send some love, here’s some options:

Tweet me - Comments and questions are always welcome, they often inspire new posts