Data science involves the use of domain knowledge. Whether it’s called subject matter expertise, or something else, our jobs would not be possible if we didn’t have knowledge about the data that we are analyzing. It’s the power that allows us to direct attention and resources to important issues, and helps us avoid dangerous pitfalls.

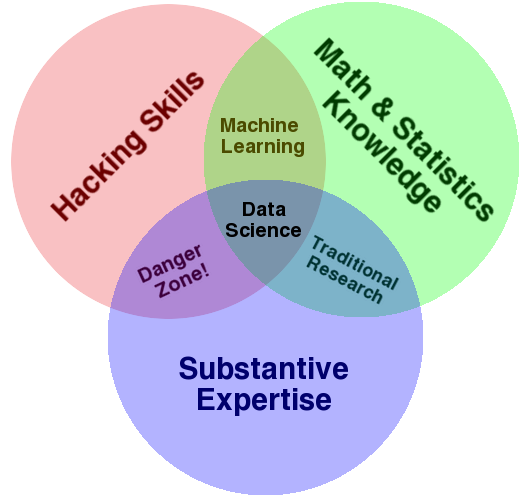

This property was recognized even way back in the days before the big data science boom, memorably encoded in Drew Conway’s famous data science Venn diagram below as “Substantive Expertise”:

The famous data science venn diagram (src)

Drew’s definition of Substantive Expertise boils down to knowledge about a field: which questions are relevant, how to generate hypotheses, what’s already been done before, what the end goal is, plus a whole endless host of other things. In modern industry DS practice, this very often translates into knowledge about the business and field: what’s important to stakeholders, what questions to ask, and where it’s all going.

Forget “Expert”, Just Think “Experience”

Subject Matter Experts are supposedly these magical creatures with knowledge, knowledge you need to do something. But the word expert implies that this person has hit an end state. They’ve “Become an expert” (cue background chorus). But if you read more and more about it, the bar is vague, somewhere between “they know some stuff you don’t” and “literally wrote the textbook on the topic”.

My problem with the whole concept is that it’s ill-defined and unrealistic. Very few people feel they’re an expert. It’s completely unsurprising to hear a world-recognized leading expert in a field say that they still have so much more to learn about their field. If they’re learning, there’s flat out no hope for me.

But the thing is, people outside of my skull apparently think I’m some kind of expert at something, despite me feeling like I’m always duct-taping things together. The only thing that I’ve done differently from those people is I’ve spent more time making mistakes in my field.

That’s literally my one differentiator—I’ve got a lot of Subject Matter Experience (in seeing things go horribly wrong…).

So This Happened Last Week…

This twitter thread was what triggered this week’s article. I was in a meeting, we had a tiny amount of telemetry (hah, such is Quant life), and I had lots of Opinions(tm). Normally, coming out of an analyst background, we’re trained to be very careful about the opinions we have. I didn’t even realize I had been speaking about opinions and possibilities until my coworker commented about how she was surprised I could read a bunch of things out of 6 numbers and some ratios between them.

Opinions can flirt dangerously with accidentally drawing conclusions that are outside the bounds of what your data can actually say. And as Cassie Kozyrkov says in her wonderful series about analytics, that’s a bad idea because drawing conclusions such as causality is in the realm of statistics and needs more rigorous tools than the analysis being done at hand. So there’s a need to be very careful about what gets said.

For example, playing with the 6 bits of data available (3 click counts and 3 counts of unique users clicking), we saw that users were opening a sub-form 1.6 times on average. From the nature of that piece of UI and how it was intended to function, that seems oddly high. The UX Researcher I was working with also agreed that people probably shouldn’t be clicking the thing so much.

If I had been presented the same bit of data 10 years ago, when I had just started as an analyst in tech, my most likely response would’ve been “people are opening this thing 1.6 times. I have no idea if this is good.” A more experienced UXer or product person (i.e. people with subject matter expertise) would have to be the ones to say 1.6 is abnormal.

But now, based on seeing countless flows of various types, patterns have been drilled into my brain. We’d love the ratio to be exactly 1.0, but realistically 1.1-1.2 clicks/user here is the best anyone could ever expect.

It was also interesting how the ratio of clicks/user changed deeper into the flow: from 1.6 to 1.3 to 1.2. Especially since the “hard work” was most likely AFTER step 2. You’d expect the multi-clicks to drop a ton on the step right before the high-friction points, not 2 steps before.
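For anyone who wants the arithmetic spelled out, here is a minimal sketch of how those per-step ratios fall out of the six numbers. The raw counts below are hypothetical (only the ratios are given above), chosen so that they reproduce 1.6, 1.3 and 1.2. The names `funnel`, `clicks_per_user` and `EXPECTED_CEILING` are made up for illustration.

```python
# Hypothetical counts: the post only reports the ratios, not the raw numbers.
# These values were picked purely so the ratios come out to 1.6, 1.3 and 1.2.
funnel = [
    ("step 1", 80, 50),  # (step label, total clicks, unique users who clicked)
    ("step 2", 52, 40),
    ("step 3", 36, 30),
]

# Rough ceiling taken from the "1.1-1.2 is the best anyone could expect" rule of thumb.
EXPECTED_CEILING = 1.2


def clicks_per_user(clicks: int, users: int) -> float:
    """Average number of clicks per unique user for a single step."""
    if users <= 0:
        raise ValueError("need at least one unique user")
    return clicks / users


ratios = {label: round(clicks_per_user(c, u), 2) for label, c, u in funnel}
flagged = [label for label, ratio in ratios.items() if ratio > EXPECTED_CEILING]

for label, ratio in ratios.items():
    note = "higher than expected" if label in flagged else "about what you'd expect"
    print(f"{label}: {ratio:.1f} clicks/user ({note})")
```

The code is the trivial part. The ceiling it compares against is not in the data at all; it comes from having seen a lot of flows.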

So we generated some hypotheses such as whether step 1 was “really scary” in some way and/or triggered a “must go read the manual now” kind of response. It’s also possible people were just exploring and shouldn’t have been starting this sub-task to begin with.

We were also able to come up with the most likely questions a stakeholder would be asking: “does this sign of friction actually stop people from completing the revenue-generating task that we want them to do?” Maybe this part of the flow is hard (but required), people struggle, learn, and eventually they figure it out and complete it. It’s less of an urgent issue if people eventually figure it out, compared to people leaving permanently because things are too hard.

Sadly, at the time we didn’t have the actual “finish the thing and generate some revenue!” data handy. But we knew that if we had it, it’d answer the most important questions and allow us to eliminate a bunch of our hypotheses one way or another. So that went onto the list of things we’re going to emphasize to stakeholders. Help us get this number (either locate it, or instrument) and we’ll tell you so much MORE about your product!

What Did Subject Matter Experience Get Us?

A strong belief (a prior if you will) that the clicks/user ratio was too high, indicating a potential issue existed

Lots and lots of hypotheses as to where and what the issue could be, and where UX Researchers can most likely look to validate the issues

Knowledge of what extra bits of data, even approximate data, would allow for many of the above hypotheses to be weeded out

Knowledge of where stakeholders are most likely to be interested if the results were presented as-is

Probably other things that I’m not self-aware of

You’ll note that nothing of the above resembles a clear “THIS IS THE ANSWER!” Heck, there’s no answers anywhere, just preliminary findings and more work to be done. From a results standpoint, there’s very little that could be seen.

The main thing saved was time. Time saved in the form of a lot of meetings and dead ends avoided. Multiplied across the people-hours of a team of very expensive engineers, PMs, researchers, etc, it’s a HUGE savings.

The simple meeting and design question here doesn’t need special tools to solve, and these issues could be found out by any skill level with some discussion with the broader team. A new analyst without the experience would eventually arrive at most of these points given some feedback and extra legwork.

But the problem with all this is that saving time this way is very hard to see from the outside, let alone measure. Trying to observe the effects of expertise saving time is about as hard as seeing the effects of a pyrotechnics expert not blowing themselves up while making a large firework. They’re obviously avoiding countless issues that would result in disaster, but we have no idea what’s been done.

Only a similarly skilled expert would be able to appreciate the pitfalls avoided.

Quietness as a Source of Impostor Syndrome

Even experts themselves are often unable to see their expertise in action in this way. That probably doesn’t help with impostor syndrome.

An experienced data scientist would never think to use a simple (uncorrected) t-test to compare 250 pairs of results at p<0.05. That’s an obviously gross example of p-hacking. It’d be as unthinkable to do that as it would be to attempt to pick up a glowing hot piece of metal. You just don’t. The response is so hard-wired, so self-evident, why would anyone even consider it? It’s only when they’re confronted by someone actually doing the unthinkable, horrible act that they even realize that what they see as obvious isn’t widely known.
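If the reason isn’t hard-wired for you yet, a back-of-the-envelope sketch shows why running that many uncorrected tests is a problem. It assumes the conventional 0.05 threshold, that the 250 comparisons are independent, and that there is no real effect anywhere; those are simplifying assumptions, not claims about any real dataset. The function names are illustrative only.

```python
ALPHA = 0.05    # assumed per-test significance threshold
N_TESTS = 250   # number of comparisons in the example above


def expected_false_positives(alpha: float, n_tests: int) -> float:
    """Expected number of 'significant' results when every null hypothesis is true."""
    return alpha * n_tests


def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """Chance of at least one false positive, assuming independent tests."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / n_tests


print(f"Expected false positives: {expected_false_positives(ALPHA, N_TESTS):.1f}")
print(f"P(at least one false positive): {familywise_error_rate(ALPHA, N_TESTS):.6f}")
print(f"Bonferroni per-test threshold: {bonferroni_threshold(ALPHA, N_TESTS):.4f}")
```

Under those assumptions you’d expect roughly a dozen “significant” results from pure noise, and at least one is all but guaranteed. Bonferroni is only one of several corrections, and a conservative one, but it makes the point.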

A friend of mine once commented to me about the tech talks that she gives around leading and managing QA teams. She mentioned how some of the things that get the biggest audience response were things that were very simple and obvious to us. Things like “your QA team should communicate and work together with the engineering team, it avoids issues.” That may sound obvious to us since we likely had to untangle issues between QA and Eng and Data, but for people embedded in different organizations with different management styles and histories, it might be something they have never heard about even in 2020.

So How Would I Know if I’ve Got Subject Matter Experience?

For the most part, you won’t. If overcoming impostor syndrome was as simple as taking a breath and a bit of introspection and looking back, it wouldn’t be such a prevalent issue among people. It’s probably better that we don’t know, since that sort of thinking often leads to overestimating our own abilities.

But every failure, every gory explosion, every tiny success, will soak into your brain if you let it. It will never crystallize into expert-ness, but by virtue of wanting to avoid painful experiences of the past, you’ll have opinions about what to do in the future.

The trick is to then leverage those experiences and apply them to the present. Sometimes it will work, sometimes it will fail because past performance doesn’t indicate future returns. Either way, that knowledge feeds on itself, until one day you’ve made more mistakes in the field than most other people. Then, at least according to Niels Bohr (supposedly, I can’t find a good citation for this quote =\ ), you’ve become an expert.

That’s probably all we can hope for.