Earlier last week I was seriously thinking about discussing how data people need to be very cautious about what they’re saying in public about COVID-19. There is a lot of contextless data and charts bouncing around that act like a magnet to us data folk. The temptation is high to make the kind of charts we would make at work, come to unrealistic conclusions, and accidentally cause more harm than good by spreading distrust in official sources from experts in the field (just look at the complexity of their model parameters on page 4).

An example of one such case is in this twitter thread here:

Sidebar: That’s not to say data scientists and engineers have a monopoly on exercising questionable judgement around COVID-19. I’ve asked around for examples from people familiar w/ virology and epidemiology for some crazy things they’ve seen and got links to a couple of MDs and even epidemiologists-turned-pundits who were apparently saying incorrect things way out of their fields of expertise. But I’m in over my head and can’t evaluate the firehose of tweets those people generate for quality, so I’m refraining from linking to anything directly here.

Meanwhile, I came across Amanda Makulec’s post about 10 things to consider before making a COVID-19 chart that seems quite reasonable to my inexpert eyes as a guideline to not totally step in it when messing with COVID-19 data.

Getting back to data science, the more I thought about the whole situation, the more I grew curious as to why exactly do we have this compulsion to begin with?

Most of us, I would imagine, wouldn’t think that our naive laptop weather models could uncover some hidden truth that professional meteorologists with supercomputers haven’t figured out. Why would we think we could project the spread of a disease better than people who have spent entire careers thinking about the subject? It’s easy to shout about Dunning-Kruger, the hubris of ${field}, or naivete, but that isn’t quite satisfying. Many of these people are neither stupid nor malicious, so why?

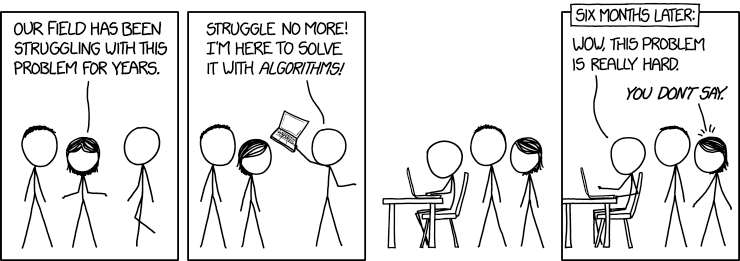

Evergreen xkcd comic: “I’m here to help”

Then it occurred to me: I’m literally paid to do the same sort of high-turnaround, atheoretic analysis in my daily work life that is causing these issues. Sometimes my naive methods work surprisingly well, but even when they don’t, I’m rewarded if I get things wrong but iterate to eventually get things right(ish). This is essentially the reverse of how proper science is done. In science, there’s strong pressure to HIDE null results, which causes the File-Drawer Problem.

The problem is within the world we’ve built around ourselves.

In industry, there is a bias towards action, not correctness

Opportunity costs are very real in industry. Decisions are made under conditions of uncertainty and competition, because nothing is ever guaranteed. One of the most important things that people coming out of school have to learn is how to judge when work is “Good enough” to be used in a business decision. It is a very different standard of rigor than what is demanded in an academic situation.

Since a decision will be made within a certain period of time, whether data is available or not, the role of a data practitioner is to provide as much data-driven guidance as possible given the constraints. It is accepted that the information provided will be incomplete, with the understanding that anything to help increase the odds of success is better than nothing.

Sure, we can advocate for waiting to collect more data, and there are definitely times when that is the correct decision to make. But when a deal absolutely needs to be signed within the week, or there’s an emergency, waiting will not be an option.

An example

So imagine that you’re working at an e-commerce site selling widgets. One day, you notice that there is an unusual amount of orders. They’re all coming from a state that doesn’t typically order many widgets. From previous experience with sales patterns, it smells fishy enough to seem like fraud of some sort, though you’re not sure why. What do you do?

Most people in that scenario would gather data on these suspicious-looking orders, looking for patterns. Are they all coming from a small handful of IPs? Are they all going to the same set of addresses? Is there some kind of external event that is somehow driving demand for widgets (like a pandemic scare or celebrity endorsement)? Did the marketing team buy a TV ad in the region?
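A first pass at those pattern checks might look something like the sketch below. Everything in it is hypothetical: the order records, the field names (`ip`, `ship_to`), and the `concentration` helper are made up for illustration, and a real investigation would pull from whatever order system you actually have.

```python
from collections import Counter

# Hypothetical order records. The field names and values are invented for
# illustration and do not come from any real system.
orders = [
    {"order_id": 1, "ip": "203.0.113.5", "ship_to": "12 Elm St"},
    {"order_id": 2, "ip": "203.0.113.5", "ship_to": "98 Oak Ave"},
    {"order_id": 3, "ip": "203.0.113.5", "ship_to": "7 Pine Rd"},
    {"order_id": 4, "ip": "203.0.113.5", "ship_to": "41 Lake Dr"},
    {"order_id": 5, "ip": "198.51.100.7", "ship_to": "3 Hill Ct"},
    {"order_id": 6, "ip": "192.0.2.44", "ship_to": "560 Main St"},
]


def concentration(records, field, top_n=3):
    """Return (share, top): the fraction of records covered by the top_n most
    common values of `field`, plus those values with their counts."""
    if not records:
        return 0.0, []
    counts = Counter(record[field] for record in records)
    top = counts.most_common(top_n)
    share = sum(count for _, count in top) / len(records)
    return share, top


# A high share for a single IP or address is a hint worth a closer look,
# not proof of fraud.
for field in ("ip", "ship_to"):
    share, top = concentration(orders, field, top_n=1)
    print(f"{field}: top value covers {share:.0%} of orders -> {top}")
```

A crude count like this is exactly the kind of quick, atheoretical check the scenario calls for: it can be run in minutes and is good enough to decide where to look next.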

With those data points in hand, you need to build a convincing case for management as to what to do. Do you decide that it’s not fraud and accept the risk of shipping product that may turn out to be stolen once the payments are charged back? Or do you declare that it’s fraud and that all the orders need to be canceled, maybe even temporarily block the whole state? You need to decide quickly because those orders would normally ship within 24 hours unless stopped, at which point your options for avoiding damage become extremely limited. The amount of money at risk is mounting with every minute.

If I were in such a situation, I’d very likely advocate for freezing all the suspicious orders quickly, buying time for further investigation without putting the company at further risk. In most situations, it’d take a line graph or two, a few descriptions of who’s making the orders, and upper management would easily agree with such a decision. Over the years, I’ve gotten very good at using data to communicate these sorts of arguments to decision makers in a way that will get their attention.

Take a look at the decision’s risk profile. The risk of letting the orders through is much greater than the risk of inconveniencing customers should the orders be legitimate. Legitimate buyers would likely come back later if there was an issue with their order; fraudsters are unlikely to do the same. Management agrees with the initial analysis and temporarily locks down every order from that state that bears a similar profile.
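That risk profile can be written down as a back-of-the-envelope expected-cost comparison. The sketch below is only an illustration: the function names, the 30% fraud probability, and the dollar figures are all assumptions I picked to show the shape of the argument, not numbers from any real incident.

```python
def expected_costs(p_fraud, loss_if_fraud_ships, loss_if_legit_frozen):
    """Compare the expected cost of shipping vs. freezing the orders.

    p_fraud: guessed probability that the orders are fraudulent (assumption).
    loss_if_fraud_ships: money lost if fraudulent orders ship (assumption).
    loss_if_legit_frozen: cost of delaying legitimate orders (assumption).
    """
    return {
        "ship": p_fraud * loss_if_fraud_ships,
        "freeze": (1 - p_fraud) * loss_if_legit_frozen,
    }


def breakeven_probability(loss_if_fraud_ships, loss_if_legit_frozen):
    """Fraud probability above which freezing has the lower expected cost.

    Freezing wins when p * L_fraud > (1 - p) * L_legit,
    i.e. when p > L_legit / (L_fraud + L_legit).
    """
    return loss_if_legit_frozen / (loss_if_fraud_ships + loss_if_legit_frozen)


# Made-up inputs: a 30% hunch that it's fraud, $50k at risk if it ships,
# $5k in goodwill and discounts if we freeze legitimate orders.
costs = expected_costs(0.3, 50_000, 5_000)
threshold = breakeven_probability(50_000, 5_000)
print(costs)
print(f"Freeze if P(fraud) > {threshold:.1%}")
```

When the loss from shipping fraud dwarfs the cost of annoying real customers, the break-even probability gets small, which is why even a weak hunch is enough to justify freezing.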

If upon further investigation we find out that it was actually fraud (because some slipped through), I look like a hero that prevented a disaster. “Stopped $X worth of fraud!” and all that.

If we find out that I was wrong and had rejected good orders, the company instantly shifts into course correction and damage control. We issue a public statement, perhaps offer a discount to reclaim the lost customers. It’s unlikely that I’m even blamed for a failure because, given the information at the time, everyone agreed it was the correct decision.

The only real way I would have been called a failure would have involved me making gross errors or omissions in analysis and reporting, bordering on negligence.

The bar is extremely low.

Failure is often not a big deal

Thanks to this asymmetry in reward structures, I’m conditioned to have a bias towards acting in certain ways. After doing things this way for over a decade, it’s practically automatic.

I’m not working in an industry where mistakes can cost lives or billions of dollars. In the grand scheme of things, my failures “don’t matter”. The cost is relatively low and failure is a part of the “fail fast, learn, iterate” culture of tech. It’s expected, and I’m largely shielded by that fact. Obviously I’d prefer to have positive results as often as possible, and I’m honestly trying to do the right thing, but my downside is very low.

Most importantly, the whole process has a ratcheting mechanism where a change that has visible negative effects can be rolled back, while positive effects are kept. Having access to an undo button means you can be pretty darned reckless. I’m sure you can see how this doesn’t translate well into situations that do not come with undo buttons, like emergency pandemic communication.

Theory? Of What?

Industry work is (usually) very atheoretical in nature. While there are overarching themes of “best practices”, like “emails with these properties have good response rates”, or “human faces tend to draw user attention”, it rarely gets refined to the point of being a rigorous theory in the scientific sense. We don’t typically operate at the level of seeking a resilient, generalizable truth. We’re simply interested in figuring out what works for the situation at hand.

The benefit of this way of doing things is that you can keep pace with changing conditions. There might not BE a magical generalized theory of good emails or registration pages. It may change over time. Best practices evolve over time.

Being atheoretical means we often tackle a broad spectrum of problems with a similar set of tools that are general in nature. For example, linear regression works practically everywhere and gives a reasonable result, especially when you’re going into a problem space blind. Interesting things can also happen when applying methods across fields.

The problem with this way of doing things is that, without worrying about articulating the theoretical concepts, it’s highly unlikely that we’ve actually sat down and thought through and explored all the factors that go into the problem. It means our models can’t account for many error-generating factors. So we wind up using relatively simplistic models because anything more complex gets thrown off too much.

When you work under such conditions for a long time, it becomes very easy to think that simplistic models “are good enough” for every problem, instead of a certain set of problems. This may be fine for starting a Kaggle competition, but it doesn’t work when you wade into a field that does have theory and refined models that have been reviewed and field tested by many scientists over decades.

So wait, what’s the harm here?

You might ask, where’s the harm? Worst case, we’re making the public aware of the danger of the situation, right? Why is that bad when everyone is so behind right now? We need to act NOW!

As I sit at my desk, watching the fumbled response to COVID-19 in the US with increasing dread, I’m not unsympathetic to this argument. But even so, I think it’s dangerous to just throw stuff out there. Here’s why.

I’ve been flipping through various emergency communication handbooks lately, mostly by governmental agencies such as WHO and FEMA, to get a feel for what all the emergency management officials are trying to do. A large part of their guidelines is centered on how the people in charge of handling the emergency need to build and maintain trust with the community via effective communication so that when the agencies try to tell the public to act in a certain way, the public is willing to listen.

If the public is sufficiently receptive to communications and has been educated about the situation, then it’s more likely that the public’s actions and the managing agency’s goals are aligned. That would be considered a good thing. We want people to wash their hands and avoid large gatherings right now, but we need people’s cooperation to make that happen.

This is where the damage from people making irresponsible statements comes in. It’s not because of “fear-mongering” or “spreading panic”, but because it erodes public trust in the experts, be they governmental or medical, who are trying to get people to change their behavior for the common good.

Trust is very easily lost, and very difficult to earn, especially in situations involving risk of death. So the effects of erosion tend to go in a bad direction. There are no undo buttons on this either. Once a rumor starts, it can get out of control very quickly in this day and age.

Data and visualizations are especially dangerous in this climate because people attribute weight and credibility to colorful pictures and numbers. Even if the numbers are complete nonsense, the mere presence of numbers and a scary chart in red going up and to the right labeled “infected” makes it harder for people to discount.

Here’s a cute 2016 paper named “Blinded with Science” by Aner Tal and Brian Wansink where (in study 1 of 3) 61 participants in a Mechanical Turk-based study found an ad for a drug more convincing when it included a graph that added no new information beyond the ad text. While the text alone convinced about two-thirds of participants that the drug was effective, all but one of the people who saw the graph thought the drug was effective.

Thanks to the magical halo of science, we literally have a cheat code into people’s information processing. This can be used for either good or evil.

Please be careful with this power.

I’d also like to take a moment to remind you that Drinks and Data Rant, aka #datarant, is happening this Tuesday, 2020-03-17, at 9pm EDT. Details are here if you’re interested in joining a bunch of data nerds to voice/video chat with each other about data.