To the surprise of no one, I have a quirky habit that doesn’t quite amount to a hobby. Every so often I’ll browse tools (this isn’t the quirky part), and I’ll spend quite a bit of extra time looking at measurement devices (this is the quirky part). Rulers, scales, meters, micrometers, gauges, etc.

One reason is that measuring tools are specialized to their fields. The measuring tools used in a wood shop, in a machine shop, and at a jeweler’s bench can vary pretty wildly, each suited to the very specific needs and standards of its industry. So looking at these artifacts can tell you a lot about a field, just by seeing what it does and doesn’t care about.

Woodworkers don’t measure their work down to the micron, because wood moves with humidity by significantly more than that. Things are typically specified in fractional inches or millimeters. Machinists will typically work down to the micron scale, and on top of that often have to consider how thermal expansion affects their parts’ measurements.

Meanwhile, high energy physicists generate so much data per second of experiment that they’ve resorted to pre-filtering it just to get it into a form that can reasonably be stored for analysis. I find it terrifying that they’re starting their interesting research work on top of a destructive data pipeline. But then, I’m no particle physicist, and I most definitely work with less rigor than they do. (CERN apparently released some of the code from the ATLAS experiment that’s part of that pre-filter as open source, and it’s available on GitLab now. I can’t make heads or tails of the code; it’s way over my head.)

As a quantitative UX researcher, or a data scientist, I think one of the primary functions of my job is to measure things. Since I work in internet tech, I’m primarily measuring intangible things: the number of views on a page, the number of clicks on a button. They could even be concepts dreamed up and defined in my own mind, like “the number of users who clicked this exact sequence of buttons, which I’m calling the ‘buy my notebooks’ task”.

Since I’m measuring conceptual things, I’ve gotten into the habit of being relatively careful about communicating the definitions of my measurements, so that someone else (including my future self) can reproduce my work. It’s a good habit, but due to the sketchy, lossy nature of data and telemetry on the internet in general, I’m positive there’s quite a bit of measurement error involved despite any care I take.
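Since I keep harping on writing definitions down, here’s a minimal Python sketch of what that can look like for the “buy my notebooks” task. The `(user_id, timestamp, button)` event format, the button names, and the function name are all hypothetical. The interesting part is the assumption baked into it: “exact sequence” is taken to mean back-to-back clicks, not merely in-order clicks with detours allowed. That’s precisely the kind of choice someone else needs to know about to reproduce the count.

```python
from collections import defaultdict

# Hypothetical button names that make up the "buy my notebooks" task.
TASK_SEQUENCE = ("view_notebooks", "add_to_cart", "checkout", "confirm_purchase")


def count_task_completions(events, sequence=TASK_SEQUENCE):
    """Count users whose clicks contain `sequence` as back-to-back clicks.

    `events` is an iterable of (user_id, timestamp, button) tuples.
    Assumption: any other click in between breaks the sequence.
    """
    clicks_by_user = defaultdict(list)
    # Order each user's clicks by time before looking for the sequence.
    for user_id, _ts, button in sorted(events, key=lambda e: (e[0], e[1])):
        clicks_by_user[user_id].append(button)

    n = len(sequence)
    completed = 0
    for buttons in clicks_by_user.values():
        # Slide a window of length n over this user's clicks.
        if any(tuple(buttons[i:i + n]) == sequence
               for i in range(len(buttons) - n + 1)):
            completed += 1
    return completed


events = [
    ("u1", 1, "view_notebooks"), ("u1", 2, "add_to_cart"),
    ("u1", 3, "checkout"), ("u1", 4, "confirm_purchase"),
    # u2 detours to a help page mid-task, so this definition doesn't count them.
    ("u2", 1, "view_notebooks"), ("u2", 2, "add_to_cart"),
    ("u2", 3, "view_help"), ("u2", 4, "checkout"), ("u2", 5, "confirm_purchase"),
]
print(count_task_completions(events))  # 1
```

Swap the sliding-window check for an “in order, gaps allowed” check and u2 would count, doubling the number. Same data, different definition.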

The problem is that I don’t have a good idea of what the measurement errors are for most of my measurements. I’m not sure we as an industry do either. We all know that telemetry generated from JavaScript can be affected by all sorts of things: user behavior, browser settings, network issues, server issues, etc. But I’ve never seen any source that even tries to estimate how much error that adds up to. I’m not sure it’s possible.

Getting back to the idea that you can get a sense of what a field deems important by looking at its measurement tools and applying some introspection… it appears that data science as an emerging field, especially within internet/tech, is primarily focused on getting AN answer to questions. We want an answer, and almost any plausible, reasoned answer will do, even when there could be massively significant hurdles in data quality and analytical rigor.

I don’t mean this in a derisive way. Things are this way because, in an unforgivingly uncertain landscape, even if we’re only right slightly better than chance, that’s still better than flying completely blind. Given the constraints we work under, I don’t believe there’s much issue with that state of the world and I gleefully participate in the shifting complexity at times. But you have to admit that in a vacuum, it’s… a pretty impressive epistemological position to take.

Since I float in a sea of unknowable uncertainty, I’m fascinated by how measurement happens in other fields, especially with real objects. It’s an extremely deep and involved field that humans have been working on for millennia.

Metrology, the field civilization depends on that you’ve probably never heard of

Metrology, the science and study of measurement, has a couple of aspects to it.

First, it’s defining units, such as defining what a meter is — currently defined as “The length of the path travelled by light in a vacuum during a time interval of 1/299792458 of a second.”

Next, it’s realizing the unit, that is, turning the definition of a unit into an actual physical thing. For example, the meter definition above could be realized by building a machine that measures how far light travels in 1/299792458 of a second. Other times, it could be as simple as declaring that “the kilogram is exactly this object” (this was true until 2019, when the kilogram was redefined; super fascinating stuff I can’t get into today).
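To get a feel for why realizing a definition is its own hard problem, here’s the back-of-the-envelope arithmetic for the light-based meter, using only the defined speed of light $c = 299\,792\,458$ m/s. A naive “time the light pulse” machine would need femtosecond-level timing to resolve a single micrometer:

$$
\Delta t = \frac{\Delta x}{c}, \qquad
\frac{1\ \text{m}}{299\,792\,458\ \text{m/s}} \approx 3.34\ \text{ns}, \qquad
\frac{1\ \mu\text{m}}{299\,792\,458\ \text{m/s}} \approx 3.34\ \text{fs}
$$

As far as I understand it, this is why practical realizations lean on laser interferometry (effectively counting wavelengths of light of a known frequency) rather than directly timing a pulse.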

Finally, the third big aspect deals with taking these ultra-refined measurement definitions and realizations that only exist in advanced labs, and bringing them into standards and practical use around the world. This is where the field touches practically every aspect of our lives.

Every measuring device that we can purchase today, from extremely expensive precision instruments, to the dodgiest, most inaccurate, can theoretically trace its measurement scale back to an official national measurement standard. The poor devices are just more likely to disagree with the standards.

The science and tech behind defining and realizing units is super cool, but I don’t know nearly enough about the field to speak to it very much. I just marvel at explanations of that stuff.

So today, I’m going to explore the last big aspect: the transmission of measurement standards out of the measurement lab and into the rest of the world.

Transmitting lengths

Since every measurement has its own definition, realization, and standards, I can’t possibly talk about everything in general. So I’m going to focus on one of the most relevant measurements in daily life: length.

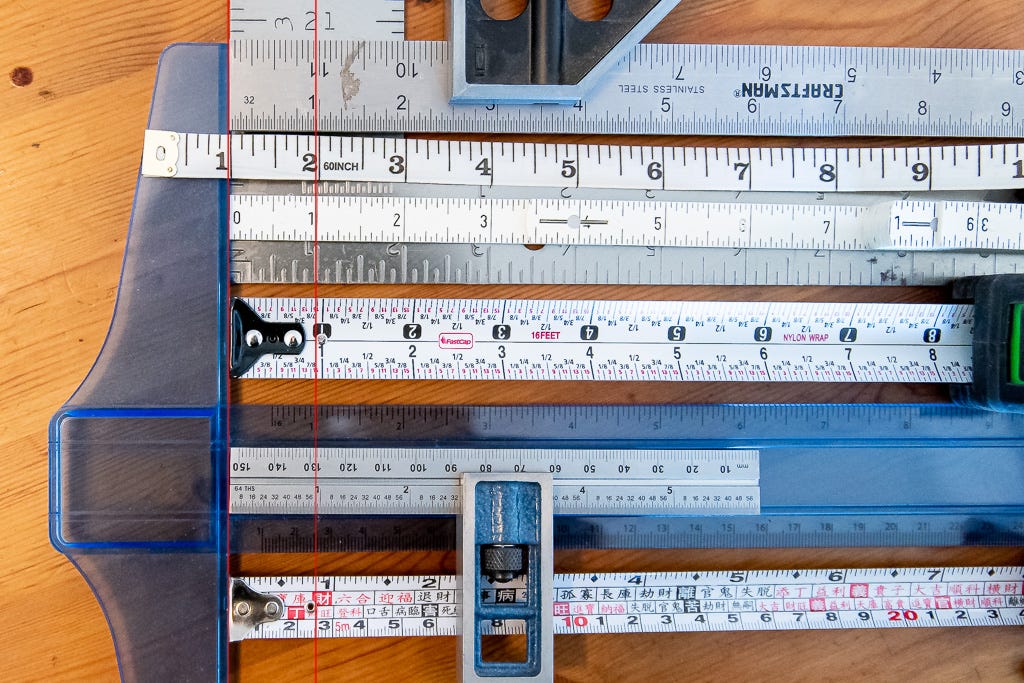

Pick up any ruler you have lying around and it’s likely got some combination of inches and centimeters on it. Normally we take it on faith that the inch marked on that ruler is an accurate representation of an inch. But is it? How would we know? Does it matter?

In common usage, the accuracy might not mean very much. When we measure our room to figure out if the new couch will fit, it doesn’t matter if our measurement is off by a couple of inches. We rarely pack furniture to sub-inch tolerances, so we honestly don’t care if the marks on the ruler are off by even a pretty large amount. Even in woodworking, due to some inherent flexing in the wood, you’d rarely measure more accurately than perhaps 1/32nd of an inch, and very often things can be looser than that. Anything more accurate is done without a length scale at all, by transferring arbitrary lengths and widths directly.

Meanwhile, if you’re machining metal parts, measurements and tolerances are often discussed in thousandths of an inch and micrometers (and fractions thereof). If things are a few thousandths of an inch out of spec, that could cause equipment failure.

For example, this site with engine specs for one model of Honda Civic gives the piston diameter spec as 2.9520" to 2.9524" (74.98 mm to 74.99 mm). The diameter is allowed to vary within a range of just 0.0004 inches (0.01 mm) and still be considered normal. Go past those tolerances and all the promises of functionality, performance, and longevity start to go out the window. Usually this is when your engine starts burning oil, spewing smoke, or losing power.

So how does the person servicing your engine check whether your pistons are in spec? They measure them. But how does the mechanic know that their ruler measures the same as Honda’s rulers? Especially since the two have to agree to significantly better than four ten-thousandths of an inch (0.0004") to tell whether the engine is out of spec.
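One way to see why the measuring tool has to be much better than the tolerance: if you only trust a reading to within ± some uncertainty, a reading near the edge of the spec band can’t be called either way. Here’s a minimal Python sketch using the piston spec above. The function names, the example readings and uncertainties, and the 10:1 ratio (a common rule of thumb sometimes called the gauge maker’s rule, assumed here, not taken from any Honda document) are all illustrative.

```python
from decimal import Decimal

MM_PER_INCH = Decimal("25.4")  # exact by international agreement since 1959

# Piston diameter spec from the example above, in inches.
SPEC_MIN = Decimal("2.9520")
SPEC_MAX = Decimal("2.9524")


def required_uncertainty(ratio=10):
    """Instrument uncertainty needed if it must be `ratio` times finer than
    the tolerance band (10:1 is an assumed rule of thumb)."""
    return (SPEC_MAX - SPEC_MIN) / ratio


def check_diameter(measured, uncertainty):
    """Classify a reading given its +/- uncertainty, all in inches."""
    if SPEC_MIN + uncertainty <= measured <= SPEC_MAX - uncertainty:
        return "in spec"
    if measured + uncertainty < SPEC_MIN or measured - uncertainty > SPEC_MAX:
        return "out of spec"
    return "can't tell"


print(SPEC_MIN * MM_PER_INCH, SPEC_MAX * MM_PER_INCH)         # 74.98080 74.99096
print(required_uncertainty())                                 # 0.00004
print(check_diameter(Decimal("2.9522"), Decimal("0.00004")))  # in spec
print(check_diameter(Decimal("2.9522"), Decimal("0.001")))    # can't tell
```

The same reading of 2.9522" is a confident pass with a good tool and a shrug with a coarse one.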

This is the problem of traceability and the transmission of length standards. Hopefully you can see how important it is. Without it, it’s hard to see how we could have modern technology, with all its tight tolerances and interchangeable parts. Practically everyone in modern society relies on this stuff.

It’s such an important problem that governments set up national and international standards bodies to handle it. The main reason governments care so much is the same pair of things all governments care about: war (weapons parts from many factories all need to be within specification) and money (industry and trade need good measures too, which means taxes).

In the US we have NIST, and other countries have their own similar bodies. Internationally, the SI system of units is maintained by the International Bureau of Weights and Measures (BIPM), created under the Metre Convention.

And are you wondering whether a cheap ruler can actually be wrong? Yup, especially small, cheap plastic rulers; see, for example, metrology experts looking into cheap plastic ruler accuracy, and jewelers pointing out manufacturing defects. Also note that on tape measures, the first inch is off by 1/16th to account for the thickness of the metal hook (the nail grab) that wiggles on the end. The wiggle is intentional: it compensates for that missing 1/16th whether you’re pulling the hook against an edge or pushing it against an object.

So how is length often transmitted in practice?

It depends!

The only way to really know how good your measuring device is would be to compare it to another, hopefully more accurate, measuring device. Your $1 school ruler could be compared against a high accuracy micrometer to see how much they agree or disagree. The micrometer could say “Your ruler’s inch is within 0.03 inches of my inch.”

That micrometer could be compared to the known lengths of a set of highly accurate gage blocks. Those gage blocks, in turn, were manufactured and compared against a set of extremely accurate master gage blocks at the manufacturer. Finally, the manufacturer has likely gone through the time and expense of sending their master gage blocks to their national standards body, like NIST, to be compared against whatever high-tech measuring devices are used there. That standards body is effectively comparing against the realized version of the defined length standard. It can certify that the manufacturer’s reference blocks are within some stated error of the national standard.

So there’s this chain of checks going on, where one device’s accuracy rests on the (better) accuracy of another device, all the way back to the actual standard. For spiffy high-precision gear, you can pay extra to get certificates from NIST and other standards bodies certifying that your tool is within tolerance of the official standards.

Each step away from the standard obviously entails more and more measurement error, since there’s more handling, different tools, laxer rules, etc. For example, the manufacturer of the cheapo $1 wood ruler probably relied on some reference scale to make the stamping/burning device that stamps the scale onto the wood blanks, but the stamp has been worn with years of use and the owner doesn’t care enough to replace it.
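Metrologists have a formal version of this idea. Each calibration step contributes its own uncertainty, and if you assume the contributions are independent, they combine as a root sum of squares (the approach laid out in the GUM, the Guide to the Expression of Uncertainty in Measurement). Here’s a minimal Python sketch; the link names and per-step numbers are made up purely for illustration.

```python
import math

# Hypothetical standard uncertainties (micrometers) contributed at each link,
# from the national standard outward. These numbers are invented for illustration.
CHAIN = [
    ("national standard -> manufacturer's master blocks", 0.02),
    ("master blocks -> production gage blocks", 0.05),
    ("gage blocks -> micrometer", 1.0),
    ("micrometer -> $1 school ruler", 50.0),
]


def combined_uncertainty(contributions):
    """Root-sum-square of independent standard uncertainties."""
    return math.sqrt(sum(u * u for u in contributions))


running = []
for step, u in CHAIN:
    running.append(u)
    print(f"{step:<52} total so far: {combined_uncertainty(running):8.3f} um")
```

Under this model the total can only grow as you move away from the standard, and it quickly gets dominated by the sloppiest link in the chain.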

Gage blocks are super cool!

While doing lots of reading and video watching to prepare for this post, I came across one measuring device I was sorely tempted to buy just to photograph, except it would cost too much to ever justify: a set of gage blocks (also spelled gauge blocks).

They’re a set of blocks, often made of some hard-wearing metal or ceramic, that are machined with extreme precision to various sizes. The trick to using them is that you can stack gage blocks to build just about any length you want, giving you reference lengths. The extremely flat surfaces of the blocks also allow you to “wring” them together by pressing them tightly, and the blocks will stick to each other.
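The fun puzzle with a gage block set is working out which blocks to wring together for a given length. A common textbook approach is to knock out the rightmost decimal digit first and work leftward. Here’s a minimal Python sketch of that approach, assuming a typical 81-piece inch set (0.1001" to 0.1009" in 0.0001" steps, 0.101" to 0.149" in 0.001" steps, 0.050" to 0.950" in 0.050" steps, and 1" to 4"). The set composition and the function names are my own assumptions, not any particular manufacturer’s catalog.

```python
from decimal import Decimal

UNIT = 10_000  # work in integer ten-thousandths of an inch to avoid float rounding


def build_81_piece_set():
    """An assumed typical 81-piece inch set, in ten-thousandths of an inch."""
    blocks = set(range(1001, 1010))             # 0.1001" .. 0.1009" (9 blocks)
    blocks |= set(range(1010, 1491, 10))        # 0.101"  .. 0.149"  (49 blocks)
    blocks |= set(range(500, 9501, 500))        # 0.050"  .. 0.950"  (19 blocks)
    blocks |= {10_000, 20_000, 30_000, 40_000}  # 1" .. 4"           (4 blocks)
    return blocks


def fmt(units):
    return f'{units // UNIT}.{units % UNIT:04d}"'


def pick_blocks(target, blocks):
    """Choose blocks summing to `target` (a string like "1.6523"), clearing
    the rightmost digit first. Each block is used at most once, and this
    simple heuristic doesn't try alternatives if a step fails."""
    value = Decimal(target) * UNIT
    if value != value.to_integral_value():
        raise ValueError(f"{target} is finer than 0.0001 in")
    remaining = int(value)
    chosen = []

    def take(block):
        nonlocal remaining
        if block not in blocks or block in chosen or block > remaining:
            raise ValueError(f"can't build {target} in from this set this way")
        chosen.append(block)
        remaining -= block

    if remaining % 10:    # 1. ten-thousandths digit: a 0.100x" block
        take(1000 + remaining % 10)
    if remaining % 500:   # 2. down to a multiple of 0.050": a 0.1xx" block
        take(1000 + remaining % 500)
    if remaining % UNIT:  # 3. rest of the fraction: a 0.050"-0.950" block
        take(remaining % UNIT)
    for block in (40_000, 30_000, 20_000, 10_000):  # 4. whole inches, largest first
        if block <= remaining:
            take(block)
    if remaining:
        raise ValueError(f"{target} in is too long for this set")
    return chosen


stack = pick_blocks("1.6523", build_81_piece_set())
print(" + ".join(fmt(b) for b in stack))  # 0.1003" + 0.1020" + 0.4500" + 1.0000"
```

Fewer blocks in the stack means fewer wrung joints, and each joint is another tiny chance for dirt or error to sneak in.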

Gage blocks are probably THE practical way that manufacturers of anything make sure their measurements are very close to the “true” standard lengths. What’s amazing is that they were invented by Carl Edvard Johansson in the late 1800s, over 100 years ago, and they exist in largely the same form today because few people have found much to improve in the design.

They’re manufactured to be accurate to within +/- 0.2 micrometers across the entire surface of the smallest blocks (gage block tolerances generally scale with the size of the block). At this level of accuracy, thermal expansion of materials becomes an important factor.
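To see why temperature matters at this scale, the usual linear expansion formula is ΔL = α · L · ΔT. Here’s a quick Python sketch. The expansion coefficient of about 11.5 × 10⁻⁶ per °C is a typical textbook figure for steel that I’m assuming here; real gage block steels and ceramics each have their own values.

```python
# Assumed coefficient of linear thermal expansion for steel, per degree C.
ALPHA_STEEL = 11.5e-6


def thermal_growth_um(length_mm, delta_t_c, alpha=ALPHA_STEEL):
    """Change in length (micrometers) for a temperature change of delta_t_c."""
    return alpha * length_mm * delta_t_c * 1_000  # mm -> micrometers


for length_mm in (1, 25, 100):
    growth = thermal_growth_um(length_mm, 1.0)
    print(f"{length_mm:>4} mm block, +1 C: {growth:.3f} um")
```

Under that assumption, a 100 mm steel block grows by more than a micrometer per degree, well beyond the ±0.2 micrometer figure above for the smallest blocks. That’s why gage blocks are specified at a reference temperature of 20°C and handled carefully to avoid warming them.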

Mitutoyo, a big manufacturer of measuring devices, has a small booklet about the history of gage blocks whose first 8 or so pages are well worth reading. I’ll quote my favorite excerpt, from pp. 7-8:

At that time [1911] the U.K. and U.S. differed slightly in the definition of the inch, as well as the standard temperature at which it was measured.

The U.K. worked to a length standard known as the Imperial Standard Yard (36 inches) and defined the reference temperature as 62 degrees Fahrenheit (16.67°C). One inch in the U.K. was precisely 25.399977mm, based on comparison with the international standard meter. On the other hand, the U.S. defined one inch as 25.4000508mm at 68 degrees Fahrenheit (20°C) (congressionally determined in 1866), which amounted to a difference of less than 3 parts per million.

Johansson actually manufactured inch gauge blocks assuming 1 inch was exactly equivalent to 25.4mm at a temperature of 20°C. In later years when the inch definition between the U.S. and U.K. was unified to agree with this definition, Johansson's gauge blocks had penetrated widely in the weapons industry, which then could not help but conform to Johansson's definition. As a result, Johansson might be seen as the originator of the current definition of the inch.
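Out of curiosity, I checked the excerpt’s arithmetic. Here’s a short Python sketch using only the three inch definitions quoted above; the labels and function name are mine.

```python
from decimal import Decimal

# Millimeters per inch under each definition quoted above.
INCH_MM = {
    "UK (62 F)": Decimal("25.399977"),
    "US (68 F, 1866)": Decimal("25.4000508"),
    "Johansson (20 C)": Decimal("25.4"),
}


def ppm_difference(a, b):
    """Relative difference of a vs. b, in parts per million."""
    return (a - b) / b * 1_000_000


uk, us, jo = INCH_MM.values()
print(f"US vs UK:        {ppm_difference(us, uk):+.2f} ppm")
print(f"Johansson vs US: {ppm_difference(jo, us):+.2f} ppm")
print(f"Johansson vs UK: {ppm_difference(jo, uk):+.2f} ppm")
# Absolute gap per inch between the US and UK definitions, in micrometers.
print(f"US - UK per inch: {(us - uk) * 1000:.4f} um")
```

The quoted “less than 3 parts per million” checks out at about 2.9 ppm, and per inch the US/UK gap works out to well under a tenth of a micrometer.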

Thanks for joining me on this little side journey this week! See you next week!

About this newsletter

I’m Randy Au, currently a quantitative UX researcher, former data analyst, and general-purpose data and tech nerd. The Counting Stuff newsletter is a weekly data/tech blog about the less-than-sexy aspects of data science, UX research and tech. With occasional excursions into other fun topics.

Comments and questions are always welcome; they often give me inspiration for new posts. Tweet me. Always feel free to share these free newsletter posts with others.

All photos/drawings used are taken/created by Randy unless otherwise noted.

Some Counting Stuff swag from RedBubble is available for print-on-demand here.

Reference Materials, extra reading

Along the way, I found a YouTube playlist introducing the basics of metrology produced by Mitutoyo. The first few videos cover the basics of practical applied metrology and are worth your time. After that, it slowly descends into practical issues like calibrating equipment, which probably isn’t worth your time. The gage block one is linked below.

Here’s an interesting publication from someone at NIST themselves that argues that gage blocks are zombie technology that should be replaced by other tech that allows extremely high accuracy measurements over longer ranges.